What are AI citations?

AI citations are small references or links that appear alongside AI-generated answers.

They point to the original sources that the answer draws on or summarizes.

AI citations help with transparency and allow users to verify where the information came from.

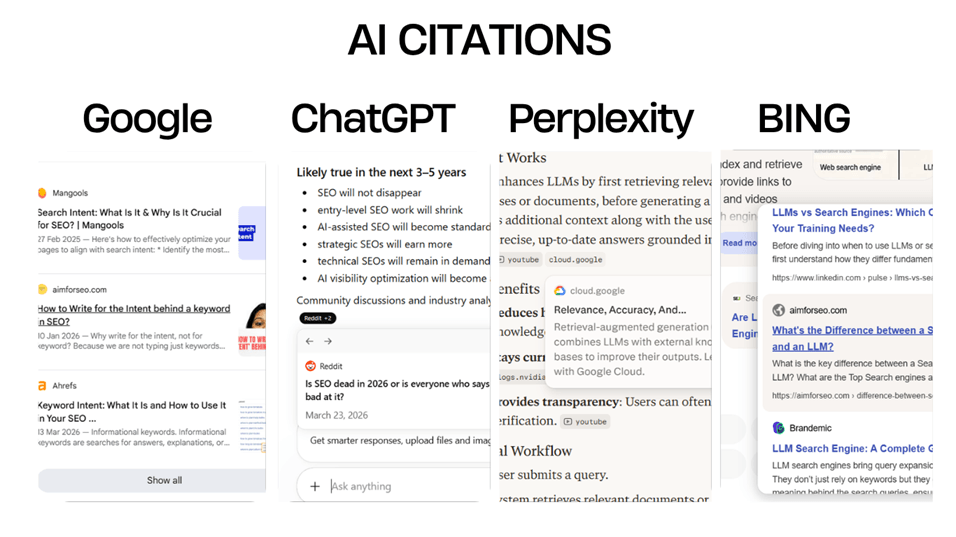

Platforms like Google, Microsoft Bing, Perplexity, and OpenAI ChatGPT provide citations to support the answers they generate.

The idea behind AI citations

In the early days of AI integration into search platforms, users mainly saw summarized answers and AI overviews.

AI systems could generate instant responses to almost any question, but this also raised concerns about reliability and trust.

ChatGPT started giving direct answers.

Google began showing summarized information through AI Overviews, but initially, there was limited visibility into where the information was actually sourced from.

Perplexity identified this gap. It not only provided instant answers but also displayed AI citations that linked back to the original sources.

Aravind Srinivas, the co-founder and CEO of Perplexity, has said in interviews that the idea was inspired by academic research practices from his college days, where research papers had to include proper references to support their claims. Perplexity applied the same approach to its answers.

Later, other AI platforms also started adopting citations as part of their responses.

How are AI citations formed?

If an LLM generates responses purely from its trained knowledge, there is usually no direct source to cite.

This is because LLMs learn patterns from large amounts of text data – they are not trained on live web links, indexed pages, or structured references that can always be traced back precisely.

However, Google’s AI systems work differently in some cases.

Google can provide citations because its AI features have access to Google’s search index. AI Overviews also do not generate answers entirely on their own; they often synthesize information from pages that are already indexed.

Perplexity, on the other hand, works more like a combination of a search engine and an LLM.

An LLM on its own, however, is not a search engine and typically does not maintain a constantly updated index of the web. Retraining a model frequently to keep its knowledge current is also extremely expensive and resource-intensive.

This is where an approach called Retrieval-Augmented Generation (RAG) comes into the picture.

When an AI model’s internal knowledge is insufficient or outdated, RAG allows the system to retrieve information from external data sources in real time and pass it to the model as context, without retraining the model.
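The retrieval step can be sketched roughly as follows. This is a minimal illustration, not how any particular platform works: it assumes a tiny in-memory corpus with hypothetical example.com URLs, and it ranks documents by simple word overlap with the query. Production systems typically query a search index or a vector database instead.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    url: str   # hypothetical source URL
    text: str  # the page content that can be retrieved


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[Document]:
    """Score each document by how many query words it shares and return the top k with a nonzero score."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in corpus]
    scored = [pair for pair in scored if pair[0] > 0]  # drop documents with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps ties in corpus order
    return [doc for _, doc in scored[:k]]


# Hypothetical corpus standing in for a web index.
corpus = [
    Document("https://example.com/rag-intro", "Retrieval-augmented generation fetches external documents at query time."),
    Document("https://example.com/llm-training", "Large language models learn patterns from text during training."),
    Document("https://example.com/recipes", "A simple recipe for banana bread."),
]

results = retrieve("How does retrieval-augmented generation fetch documents?", corpus)
for doc in results:
    print(doc.url)
```

Only the first document shares words with the query, so it is the only one returned. The model never has to be retrained; the corpus can change between queries.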

The sources fetched during the RAG process are often the same sources that later appear as AI citations in the generated response.
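The link between retrieved sources and visible citations can be sketched like this, assuming the retrieval step has already returned (URL, passage) pairs. Each passage is numbered in the prompt, and `[n]` markers in the answer are mapped back to URLs. The function names and URLs are hypothetical, and the model call is stubbed out with a hardcoded answer; real platforms may format sources and citations differently.

```python
import re

# Hypothetical output of a retrieval step: (url, passage) pairs.
retrieved = [
    ("https://example.com/rag-intro", "RAG fetches external documents at query time."),
    ("https://example.com/citations", "Answers can link back to the pages they drew from."),
]


def build_prompt(question: str, sources: list[tuple[str, str]]) -> str:
    """Number each retrieved passage so the model can refer to it as [1], [2], ..."""
    lines = [f"[{i}] {passage}" for i, (_, passage) in enumerate(sources, start=1)]
    return (
        "Answer using only the sources below and cite them as [n].\n\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {question}"
    )


def extract_citations(answer: str, sources: list[tuple[str, str]]) -> list[str]:
    """Map [n] markers in the answer back to source URLs, in order of first appearance."""
    urls: list[str] = []
    for match in re.findall(r"\[(\d+)\]", answer):
        index = int(match) - 1
        # Ignore markers that point outside the retrieved list, and skip duplicates.
        if 0 <= index < len(sources) and sources[index][0] not in urls:
            urls.append(sources[index][0])
    return urls


prompt = build_prompt("How do AI citations work?", retrieved)
# Stand-in for an LLM call; a real system would send `prompt` to a model.
answer = "The system retrieves pages at query time [1] and links back to them [2][1]."
print(extract_citations(answer, retrieved))
```

Because the citation numbers refer to the retrieved list, the links shown to the user are exactly the pages that were fetched during retrieval.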

Conclusion

When RAG is in use, the AI system may retrieve information from web pages, news articles, live databases, PDFs, research papers, forums, videos, and other online content.

As a result, AI citations can point to many different types of sources, including websites, Reddit discussions, LinkedIn posts, and YouTube videos – depending on the query and which sources are considered most relevant and reliable for the context.
