What is RAG in LLM terminology?

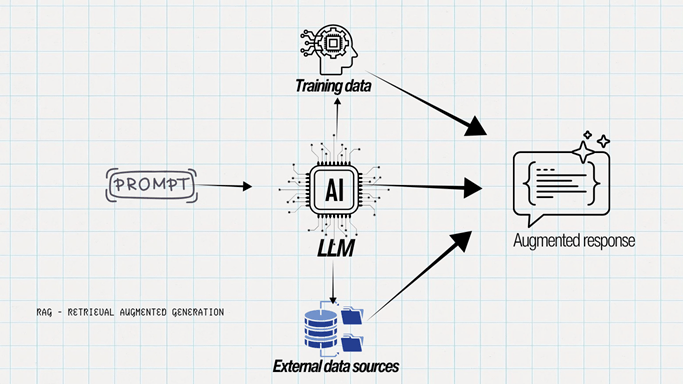

RAG (Retrieval-Augmented Generation) is a technique that lets an LLM fetch relevant, up-to-date information from external sources before it generates a response.

By default, LLMs generate responses using only the knowledge they learned during training. However, training data alone is not always enough to answer every question accurately, especially when the query falls outside the model’s training scope or involves recent information.

In such cases, an LLM may still generate a response, but with reduced accuracy. This can lead to false or fabricated information.

This happens because LLMs such as GPT models are primarily designed to generate plausible text, not to verify facts in real time. Although these models are periodically updated and retrained on newer datasets, those updates take time.

RAG helps bridge this gap. It connects the LLM to external data sources to retrieve fresh and relevant information before generating a response.

Once the information is retrieved, the LLM uses it as additional context to generate a more accurate and informed answer. The retrieval step augments the generation step, hence the name Retrieval-Augmented Generation.
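The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCUMENTS` list is a hypothetical knowledge base, and simple bag-of-words cosine similarity stands in for the embedding models and vector databases that real RAG systems typically use. The final generation step is left as a comment, because it would require calling an actual LLM (`call_llm` is a hypothetical name).

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would typically
# search a vector database or a search engine instead.
DOCUMENTS = [
    "The store opens at 9 am and closes at 6 pm on weekdays.",
    "Returns are accepted within 30 days with a receipt.",
    "The loyalty program gives one point per dollar spent.",
]

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Step 1 (retrieval): return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Step 2 (augmentation): place the retrieved text in front of the question."""
    context_block = "\n".join(f"- {chunk}" for chunk in context)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {query}"
    )

query = "When are returns accepted?"
prompt = build_prompt(query, retrieve(query, DOCUMENTS))
print(prompt)
# Step 3 (generation) would send `prompt` to an LLM through a hypothetical
# call_llm(prompt); that call is omitted here.
```

For this query, the returns document is the only one that shares words with the question, so it is the context the model would see. Swapping the toy similarity function for real embeddings changes the quality of retrieval, not the shape of the pipeline.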

What Does RAG Solve?

No Need to Retrain the Model

LLMs have a knowledge cutoff, which means they are trained only on data available up to a certain point in time. For example, suppose a model’s knowledge cutoff is May 2026.

Once we move beyond that date, the model would need retraining to learn about new information, trends, and news. But retraining large models frequently is very expensive.

RAG avoids this cost by connecting the model to external data sources and fetching fresh information at query time, before a response is generated. The model can provide up-to-date information without being retrained every time something changes, which makes RAG a cost-effective approach.
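The key point is that new knowledge goes into the data source, not into the model. The sketch below illustrates this with a hypothetical `KnowledgeBase` class that ranks documents by simple word overlap (an assumption made to keep the example short; real systems usually rely on embeddings). Adding a document is the entire update, and no model weights are touched.

```python
import re
from typing import Optional

def tokens(text: str) -> set[str]:
    """Return the lowercased word tokens of a text as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class KnowledgeBase:
    """Hypothetical document store that is searched at query time."""

    def __init__(self) -> None:
        self.documents: list[str] = []

    def add(self, document: str) -> None:
        # Indexing a new document is the entire "update"; no model weights change.
        self.documents.append(document)

    def search(self, query: str) -> Optional[str]:
        """Return the document sharing the most words with the query, or None."""
        query_tokens = tokens(query)
        best, best_overlap = None, 0
        for doc in self.documents:
            overlap = len(query_tokens & tokens(doc))
            if overlap > best_overlap:
                best, best_overlap = doc, overlap
        return best

kb = KnowledgeBase()
kb.add("The store opens at 9 am on weekdays.")
print(kb.search("holiday return policy"))  # None: nothing relevant is indexed yet

# Information published after the model's knowledge cutoff is simply indexed.
kb.add("Holiday return policy: gifts can be returned until January 31.")
print(kb.search("holiday return policy"))
```

After the second `add`, the same query finds the new policy document, so a RAG pipeline built on this store could answer with information the model never saw during training.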

Reducing AI Hallucinations

AI hallucinations often occur when a model lacks sufficient or reliable information. There are other causes as well, such as vague prompts, biased training data, and fabricated or low-quality sources. However, incomplete context or missing knowledge is one of the primary reasons an AI model generates incorrect responses.

RAG helps reduce hallucinations by enabling LLMs to retrieve up-to-date, relevant information from external sources such as the web, news, social media, and documents, and to ground their response in that retrieved context.

Transparency for Users

In many chat assistants, RAG retrieves information from the web; other systems search a private collection of documents instead. Along with the generated response, a RAG system can provide citations showing where the information was sourced from.

This helps users understand how the response was generated. They can also verify the information further by exploring the original sources.
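Citations work only if the system keeps track of where each retrieved passage came from. Below is a minimal sketch, assuming the retriever returns passages together with a source URL (the `Source` type and the example.com URLs are hypothetical) and that the model is instructed to cite sources as [1], [2], and so on. The model’s answer is hard-coded in place of a real LLM call.

```python
import re
from dataclasses import dataclass

@dataclass
class Source:
    """A retrieved passage plus where it came from (hypothetical fields)."""
    url: str
    text: str

def build_cited_prompt(question: str, sources: list[Source]) -> str:
    """Number each source so the model can refer to it as [1], [2], ..."""
    lines = [f"[{i}] {s.text}" for i, s in enumerate(sources, start=1)]
    return (
        "Answer using the numbered sources and cite them like [1].\n"
        + "\n".join(lines)
        + f"\nQuestion: {question}"
    )

def resolve_citations(answer: str, sources: list[Source]) -> list[str]:
    """Map [n] markers in the answer to source URLs, in order of first use."""
    urls: list[str] = []
    for match in re.findall(r"\[(\d+)\]", answer):
        index = int(match) - 1
        # Ignore markers that point at no retrieved source, and skip duplicates.
        if 0 <= index < len(sources) and sources[index].url not in urls:
            urls.append(sources[index].url)
    return urls

sources = [
    Source("https://example.com/returns", "Returns are accepted within 30 days."),
    Source("https://example.com/hours", "The store opens at 9 am on weekdays."),
]
print(build_cited_prompt("When can I return an item?", sources))

# A hypothetical model answer; in practice this would come from the LLM.
answer = "You can return items within 30 days [1]."
print(resolve_citations(answer, sources))  # ['https://example.com/returns']
```

Checking each marker against the list of retrieved sources, rather than trusting it, is a deliberate choice: a model can cite a source number that does not exist, and such markers are simply dropped here.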

Conclusion

When generative AI tools first became widely adopted, they faced several challenges such as hallucinations, inaccurate responses, and outdated knowledge. Over time, AI systems have continued to evolve by addressing these limitations step by step.

RAG is one such advancement that helps improve the accuracy and reliability of LLMs by connecting them with fresh and relevant external information before generating a response.